Category: Technology

-

UniFi update blocking Tor

TLDR: UniFi Network Application 7.4.162 may start blocking Tor, even though the corresponding option is disabled.

I just spent a couple hours troubleshooting my Bitcoin Lightning node because suddenly (over night) all channels where down. After a while I realized that, while the Bitcoin node was still connected, it only connected over clearnet and no longer Tor.… Continued

-

Apple didn’t get it to work

Apple “decided to not proceed with the proposal for a hybrid client-server approach to CSAM detection for iCloud Photos from a few years ago,” and “concluded it was not practically possible to implement without ultimately imperiling the security and privacy of our users.”… Continued

-

Entropy

Here is another reminder that using strong entropy (randomness) for crypto wallets is a must, otherwise people are able to guess your private keys and steal all assets.

Klever Alert: External Security Incident -

Fool with a Tool

Impressive progress using an LLM to support smart contract auditors with their work.

Worth repeating: good people will be able to do more good stuff with new tools, but a fool with a tool is still a fool – they’ll just produce a lot more foolishness faster than before.… Continued -

Meta Device

Meta is selling a VR experience while Apple is selling a device that can do anything – who’s playing the open platform game now? But also: which model will win (if any)? Jury is still out there.

-

Razr again

I’m still tempted to get the Motorola Razr 40 Ultra when it’s available in Switzerland. I really prefer small phones, but also love the luxury of a bigger screen for all occasional game or movie.

It just seems to have found the perfect formula for this.… Continued

-

Limits of DeFi

Fully automated DeFi is fine as long as it’s within defined boundary conditions. Outside those, you rely on manual interventions – same as in TradFi.

Good for these folks to have a DAO process that enables this, even though it’s crude and likely wasn’t intended for this use case 🤷

BNB Chain team prepares to step in to prevent massive Venus Protocol liquidation -

Zuck about Vision Pro

Remember Meta? They approach AR/VR fundamentally different from Apple, are solving a different problem. Will be interesting to watch!

Here’s what Mark Zuckerberg thinks about Apple’s Vision Pro

Apple finally announced their headset, so I want to talk about that for a second.… Continued

-

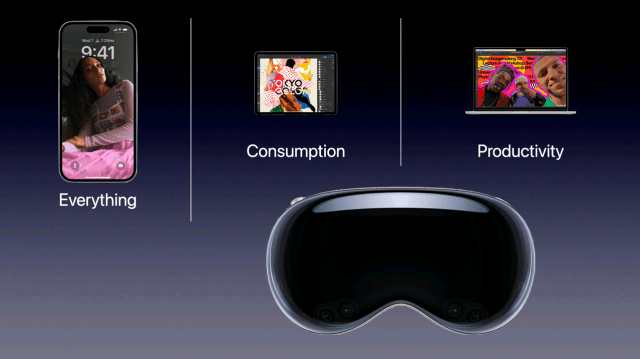

Production & Consumption

Ben Thompson can ask and answer this about Apple Vision Pro much better than I, go read it!

What exactly is this useful for? Who has room for another device in their life, particularly one that costs $3,499?

Apple Vision Stratechery